Top 5 Most-Discussed Papers

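It is the first day of the National Day holiday, and the whole country is celebrating! Tourist spots are bound to be packed, so staying home to read papers is not a bad alternative. Submissions to ICLR 2019, one of the top machine learning conferences, closed recently, and the submitted papers are publicly available in anonymized form. This article rounds up some of the papers currently drawing the most discussion on overseas social networks and on Zhihu. Let's take a look!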

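First, let's look at the distribution of paper topics among submissions to ICLR 2018, last year's conference, shown in the figure below. Popular keywords: reinforcement learning, GAN, RIP, and so on.

The figure above shows the topic distribution of ICLR 2019 submissions. Popular keywords: reinforcement learning, GAN, meta-learning, and so on. There are some shifts compared with last year.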

Submitted papers:

https://openreview.net/group?id=ICLR.cc/2019/Conference

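A more intuitive visualization of how the topics of ICLR 2019 submissions relate to one another is available on Google Colaboratory. We selected "GAN", the third-ranked topic in the figure above, which is shown in red. As the figure shows, GAN overlaps with several other topics in the table, such as training, state, and graph.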
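The kind of topic overlap described above can be approximated by counting how often keywords appear together in the same paper title. The following is a minimal sketch, not the method used in the Colaboratory notebook: the keyword list and the matching rule (case-insensitive substring match) are assumptions, and the sample titles are taken from papers listed in this article.

```python
from collections import Counter
from itertools import combinations

# Hypothetical keyword list; the notebook's real vocabulary is not given in this article.
KEYWORDS = ["gan", "graph", "training", "reinforcement learning", "attention"]

def keywords_in(title):
    """Return the keywords found in a title (case-insensitive substring match).

    Substring matching is crude: "gan" would also match "organ", for example.
    """
    lowered = title.lower()
    return {kw for kw in KEYWORDS if kw in lowered}

def cooccurrence(titles):
    """Count titles per keyword and titles per unordered keyword pair."""
    singles, pairs = Counter(), Counter()
    for title in titles:
        found = sorted(keywords_in(title))
        singles.update(found)
        pairs.update(combinations(found, 2))
    return singles, pairs

titles = [
    "Large Scale GAN Training for High Fidelity Natural Image Synthesis",
    "Recurrent Experience Replay in Distributed Reinforcement Learning",
    "Relational Graph Attention Networks",
    "How Powerful are Graph Neural Networks?",
]
singles, pairs = cooccurrence(titles)
```

Here `singles` holds the per-keyword counts and `pairs` the counts for keyword pairs that share a title, which is the raw material a topic-overlap chart would be drawn from.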

Top 5 Most-Discussed Papers

1. LARGE SCALE GAN TRAINING FOR HIGH FIDELITY NATURAL IMAGE SYNTHESIS

The strongest GAN image generator yet: real and fake are hard to tell apart

Paper:

https://openreview.net/pdf?id=B1xsqj09Fm

More samples:

https://drive.google.com/drive/folders/1lWC6XEPD0LT5KUnPXeve_kWeY-FxH002

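The first paper is BigGAN, the standout of the group. Oriol Vinyals, who leads the StarCraft project at DeepMind, said that this paper delivers the best GAN-generated images to date, raising the Inception Score by more than 100 points.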

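Abstract:

Despite recent progress in generative image modeling, generating high-resolution, diverse samples from complex datasets such as ImageNet remains a major challenge. To this end, the researchers train generative adversarial networks at the largest scale attempted so far and study the instabilities specific to training at that scale. They find that applying orthogonal regularization to the generator makes it amenable to a simple "truncation trick", which allows fine control over the trade-off between sample fidelity and variety by truncating the latent space. These modifications let the model reach state-of-the-art performance in class-conditional image synthesis. When trained on ImageNet at 128x128 resolution, the proposed model, BigGAN, achieves an Inception Score (IS) of 166.3 and a Frechet Inception Distance (FID) of 9.6, whereas the previous best IS and FID were 52.52 and 18.65.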
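As a rough illustration of the truncation trick mentioned in the abstract, the sketch below draws each latent component from a standard normal distribution and redraws any component whose magnitude exceeds a threshold. It is a plain-Python sketch under stated assumptions, not the authors' code: the function name `truncated_latent`, the latent size of 128, and the threshold values are illustrative only.

```python
import random

def truncated_latent(dim, threshold, rng=random):
    """Sample a latent vector with N(0, 1) components, redrawing any component
    whose absolute value exceeds `threshold` (rejection resampling)."""
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    z = []
    for _ in range(dim):
        value = rng.gauss(0.0, 1.0)
        while abs(value) > threshold:
            value = rng.gauss(0.0, 1.0)   # redraw until the value is inside the range
        z.append(value)
    return z

rng = random.Random(0)                    # seeded only to make the example repeatable
z_wide = truncated_latent(128, threshold=2.0, rng=rng)
z_narrow = truncated_latent(128, threshold=0.5, rng=rng)
```

A smaller threshold keeps the latent vector closer to the origin, which corresponds to the higher-fidelity, lower-variety end of the trade-off the abstract describes. The generator that would consume `z_wide` or `z_narrow` is not shown.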

BigGAN generator architecture

Generated samples, strikingly lifelike

2. Recurrent Experience Replay in Distributed Reinforcement Learning

Recurrent experience replay in distributed reinforcement learning

Paper:

https://openreview.net/pdf?id=r1lyTjAqYX

Building on the recent successes of distributed training of RL agents, in this paper we investigate the training of RNN-based RL agents from experience replay. We investigate the effects of parameter lag resulting in representational drift and recurrent state staleness and empirically derive an improved training strategy. Using a single network architecture and fixed set of hyper-parameters, the resulting agent, Recurrent Replay Distributed DQN, triples the previous state of the art on Atari-57, and surpasses the state of the art on DMLab-30. R2D2 is the first agent to exceed human-level performance in 52 of the 57 Atari games.

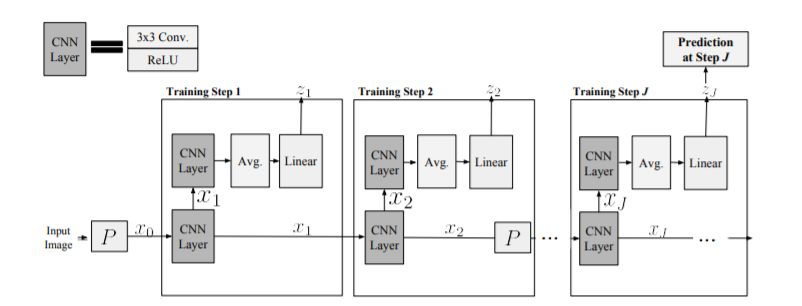
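The abstract above refers to parameter lag and recurrent state staleness. As we read it, a recurrent state saved in the replay buffer was computed by an older copy of the network than the one currently being trained. The sketch below shows one way to make that lag visible, by storing each sequence together with its starting recurrent state and the parameter version that produced it. It is a toy illustration, not the paper's agent: `SequenceRecord`, `SequenceReplay`, and the integer `param_version` counter are hypothetical names introduced here.

```python
import random
from collections import deque
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class SequenceRecord:
    transitions: List[Tuple]      # e.g. (observation, action, reward) tuples
    initial_state: List[float]    # recurrent state when the sequence began
    param_version: int            # version of the network that produced that state

class SequenceReplay:
    """Fixed-capacity buffer of sequences (hypothetical helper for illustration)."""

    def __init__(self, capacity, seed=None):
        self.buffer = deque(maxlen=capacity)   # oldest sequences are evicted first
        self.rng = random.Random(seed)

    def add(self, transitions, initial_state, param_version):
        record = SequenceRecord(list(transitions), list(initial_state), param_version)
        self.buffer.append(record)

    def sample(self, batch_size, current_version):
        """Return (record, lag) pairs; lag counts parameter updates since storage."""
        batch = self.rng.sample(list(self.buffer), batch_size)
        return [(rec, current_version - rec.param_version) for rec in batch]

replay = SequenceReplay(capacity=2, seed=0)
replay.add([("obs0", 0, 1.0)], initial_state=[0.0, 0.0], param_version=1)
replay.add([("obs1", 1, 0.0)], initial_state=[0.1, -0.2], param_version=2)
replay.add([("obs2", 0, 0.5)], initial_state=[0.3, 0.4], param_version=3)  # evicts version 1
batch = replay.sample(batch_size=2, current_version=5)
```

A larger lag means the stored `initial_state` is further out of date relative to the current network, which is the staleness effect the paper sets out to study.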

3. Shallow Learning For Deep Networks

Shallow learning for deep neural networks

Paper:

https://openreview.net/forum?id=r1Gsk3R9Fm

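Shallow supervised 1-hidden-layer neural networks have a number of favorable properties that make them easier to interpret, analyze, and optimize than their deep counterparts, but they lack representational power. Here we use 1-hidden-layer learning problems to sequentially build deep networks layer by layer, which can inherit properties from shallow networks. Contrary to previous approaches using shallow networks, we focus on problems where deep learning is reported as critical for success. We thus study CNNs on two large-scale image recognition tasks: ImageNet and CIFAR-10. Using a simple set of ideas for architecture and training, we find that solving sequential 1-hidden-layer auxiliary problems leads to a CNN that exceeds AlexNet performance on ImageNet. Extending our training methodology to construct individual layers by solving 2- and 3-hidden-layer auxiliary problems, we obtain an 11-layer network that exceeds VGG-11 on ImageNet, obtaining 89.8% top-5 single-crop accuracy. To our knowledge, this is the first competitive alternative to end-to-end training of CNNs that can scale to ImageNet. We conduct a wide range of experiments to study the properties this induces on the intermediate layers.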

4. Relational Graph Attention Networks

Relational graph attention networks

Paper:

https://openreview.net/forum?id=Bklzkh0qFm&noteId=HJxMHja3Y7

Abstract:

In this paper we present Relational Graph Attention Networks, an extension of Graph Attention Networks to incorporate both node features and relational information into a masked attention mechanism, extending graph-based attention methods to a wider variety of problems, specifically, predicting the properties of molecules. We demonstrate that our attention mechanism gives competitive results on a molecular toxicity classification task (Tox21), enhancing the performance of its spectral-based convolutional equivalent. We also investigate the model on a series of transductive knowledge base completion tasks, where its performance is noticeably weaker. We provide insights as to why this may be, and suggest when it is appropriate to incorporate an attention layer into a graph architecture.
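To make the idea of masked attention over relational edges more concrete, the sketch below computes attention weights for one node using only its neighbors, separately for each relation type. It is a simplified illustration and not the paper's mechanism: the scores are plain dot products rather than learned attention functions, and the function names, node features, and relation labels are all assumptions made for this example.

```python
import math

def masked_attention(node, features, neighbors):
    """Attention weights of `node` over its neighbors only.

    Scores are plain dot products (an assumption made for brevity); non-neighbors
    are masked out by never entering the softmax.
    """
    scores = {
        j: sum(a * b for a, b in zip(features[node], features[j]))
        for j in neighbors
    }
    if not scores:
        return {}
    top = max(scores.values())            # subtract the max for numerical stability
    exp_scores = {j: math.exp(s - top) for j, s in scores.items()}
    total = sum(exp_scores.values())
    return {j: e / total for j, e in exp_scores.items()}

def relational_attention(node, features, edges_by_relation):
    """Run masked attention separately for each relation type."""
    return {
        relation: masked_attention(node, features, adjacency.get(node, []))
        for relation, adjacency in edges_by_relation.items()
    }

# Toy molecule-like graph: node features and two hypothetical bond types.
features = {0: [1.0, 0.0], 1: [0.5, 0.5], 2: [0.0, 1.0], 3: [1.0, 1.0]}
edges_by_relation = {
    "single": {0: [1, 2]},
    "double": {0: [3]},
}
weights = relational_attention(0, features, edges_by_relation)
```

In this toy setup the weights within each relation sum to one, and a node reachable only through the "double" relation never receives weight under "single". Normalizing across all relations at once instead would be a different design choice.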

5. A Solution to China Competitive Poker Using Deep Learning

A deep learning algorithm for Dou dizhu

Paper:

https://openreview.net/forum?id=rJzoujRct7

Abstract:

Recently, deep neural networks have achieved superhuman performance in various games such as Go, chess and Shogi. Compared to Go, China Competitive Poker, also known as Dou dizhu, is a type of imperfect information game, including hidden information, randomness, multi-agent cooperation and competition. It has become widespread and is now a national game in China. We introduce an approach to play China Competitive Poker using Convolutional Neural Network (CNN) to predict actions. This network is trained by supervised learning from human game records. Without any search, the network already beats the best AI program by a large margin, and also beats the best human amateur players in duplicate mode.

Other interesting papers:

What are the highlights worth watching at ICLR 2019? Answer by Bolei Zhou (周博磊) on Zhihu

https://www.zhihu.com/question/296404213/answer/500575759

Titles that open with a question:

Are adversarial examples inevitable?

Transfer Value or Policy? A Value-centric Framework Towards Transferrable Continuous Reinforcement Learning

How Important is a Neuron?

How Powerful are Graph Neural Networks?

Do Language Models Have Common Sense?

Is Wasserstein all you need?

Aphorism-style titles:

Learning From the Experience of Others: Approximate Empirical Bayes in Neural Networks

In Your Pace: Learning the Right Example at the Right Time

Learning what you can do before doing anything

Like What You Like: Knowledge Distill via Neuron Selectivity Transfer

Don't Settle for Average, Go for the Max: Fuzzy Sets and Max-Pooled Word Vectors

Witty titles:

Look Ma, No GANs! Image Transformation with ModifAE

No Pressure! Addressing Problem of Local Minima in Manifold Learning

Backplay: 'Man muss immer umkehren'

Talk The Walk: Navigating Grids in New York City through Grounded Dialogue

Fatty and Skinny: A Joint Training Method of Watermark

A bird's eye view on coherence, and a worm's eye view on cohesion

Beyond Winning and Losing: Modeling Human Motivations and Behaviors with Vector-valued Inverse Reinforcement Learning

One-sentence-summary titles:

ImageNet-trained CNNs are biased towards texture; increasing shape bias improves accuracy and robustness.
