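How do you deploy a Ceph cluster with cephadm?

Description

I. Introduction to cephadm

Starting with Red Hat Ceph Storage 5, cephadm replaces ceph-ansible as the tool for managing the entire cluster lifecycle, including deployment, management, and monitoring.

The cephadm bootstrap process creates a small storage cluster on a single node (the bootstrap node). It consists of one Ceph Monitor and one Ceph Manager, plus any required dependencies.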

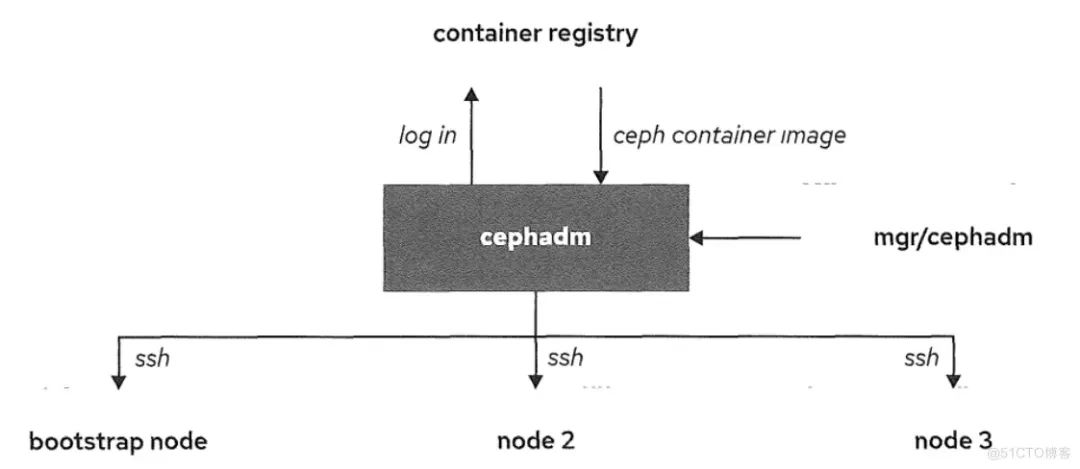

如下图所示:

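cephadm can log in to a container registry, pull the Ceph images, and use them to deploy services on the corresponding Ceph nodes. The Ceph container images are required for deploying a Ceph cluster, because the deployed Ceph containers are built from those images.

cephadm uses SSH to communicate with the cluster nodes. Over these SSH connections it can add hosts to the cluster, add storage, and monitor those hosts.

The packages a node needs in order to bring the cluster up are cephadm, podman or docker, python3, and chrony. This containerized approach reduces the complexity and the dependencies of a Ceph cluster deployment.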

1. python3

yum -y install python3

2. podman or docker to run the containers

# Install docker-ce from the Alibaba Cloud mirror

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

sed -i 's+download.docker.com+mirrors.aliyun.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

yum -y install docker-ce

systemctl enable docker --now

# Configure a registry mirror

mkdir -p /etc/docker

tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://bp1bh1ga.mirror.aliyuncs.com"]

}

EOF

systemctl daemon-reload

systemctl restart docker

3. Time synchronization (for example chrony or NTP)
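The prerequisites listed above can be checked on each node before continuing. The following is a minimal sketch and not part of the original procedure: `missing_tools` is a helper name made up for illustration, and it only checks that the commands are on the PATH, not that the corresponding services are running. `lvcreate` is included because the bootstrap step also verifies that lvm2 is present.

```shell
# Print every command from the argument list that is not found on PATH.
# missing_tools is a hypothetical helper name, not part of cephadm.
missing_tools() {
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            echo "$tool"
        fi
    done
}

# cephadm accepts either podman or docker, so only one of them is required.
if [ -n "$(missing_tools podman)" ] && [ -n "$(missing_tools docker)" ]; then
    echo "missing: podman or docker"
fi

# python3, chronyc (from chrony) and lvcreate (from lvm2) are checked by name.
for name in $(missing_tools python3 chronyc lvcreate); do
    echo "missing: $name"
done
```

If the script prints nothing, all of the checked commands were found.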

II. Preparation before deploying the Ceph cluster

2.1 Node preparation

| Node | OS | IP address | Ceph roles | Disks |
| --- | --- | --- | --- | --- |
| node1 | Rocky Linux release 8.6 | 172.24.1.6 | mon, mgr, server, admin node | /dev/vdb, /dev/vdc, /dev/vdd |
| node2 | Rocky Linux release 8.6 | 172.24.1.7 | mon, mgr | /dev/vdb, /dev/vdc, /dev/vdd |
| node3 | Rocky Linux release 8.6 | 172.24.1.8 | mon, mgr | /dev/vdb, /dev/vdc, /dev/vdd |
| node4 | Rocky Linux release 8.6 | 172.24.1.9 | client, admin node | |

2.2 Edit /etc/hosts on every node

172.24.1.6 node1

172.24.1.7 node2

172.24.1.8 node3

172.24.1.9 node4
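Because the same four entries have to be added on every node, it can help to append them idempotently so that a second run does not duplicate lines. A minimal sketch, assuming the helper names `hosts_entries` and `add_hosts` (both hypothetical); the demonstration writes to a scratch file created with `mktemp` rather than to the real /etc/hosts.

```shell
# Host entries from section 2.2, one "IP name" pair per line.
hosts_entries() {
    cat <<'EOF'
172.24.1.6 node1
172.24.1.7 node2
172.24.1.8 node3
172.24.1.9 node4
EOF
}

# Append each entry to the given file unless that exact line is already there.
# add_hosts is a hypothetical helper; pass /etc/hosts as the argument on a real node.
add_hosts() {
    target="$1"
    hosts_entries | while read -r entry; do
        grep -qxF "$entry" "$target" 2>/dev/null || echo "$entry" >> "$target"
    done
}

# Demonstration against a scratch file instead of the real /etc/hosts.
scratch="$(mktemp)"
add_hosts "$scratch"
add_hosts "$scratch"   # the second run adds nothing
cat "$scratch"
```

On a real node the call would be `add_hosts /etc/hosts`, run as root.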

2.3 Set up passwordless SSH login from node1

[root@node1 ~]# ssh-keygen

[root@node1 ~]# ssh-copy-id root@node2

[root@node1 ~]# ssh-copy-id root@node3

[root@node1 ~]# ssh-copy-id root@node4
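Once the keys are copied, it is worth confirming that node1 can really log in without a password, since later steps depend on it. A minimal sketch: `check_passwordless` is a hypothetical helper, and the `SSH` variable exists only so the client can be swapped out when trying the function without real hosts. The 5-second connection timeout is an arbitrary choice.

```shell
# SSH client to use; overridable so the function can be exercised without real hosts.
SSH="${SSH:-ssh}"

# Return 0 only if every listed node accepts key-based login as root.
# check_passwordless is a hypothetical helper name.
check_passwordless() {
    failed=0
    for node in "$@"; do
        # BatchMode=yes makes ssh fail instead of prompting for a password.
        if $SSH -o BatchMode=yes -o ConnectTimeout=5 "root@$node" true </dev/null; then
            echo "ok: $node"
        else
            echo "FAILED: $node"
            failed=1
        fi
    done
    return "$failed"
}
```

On node1 this could be invoked as `check_passwordless node2 node3 node4`.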

III. Install cephadm on node1

1. Install the EPEL repository

[root@node1 ~]# yum -y install epel-release

2. Install the Ceph repository

[root@node1 ~]# yum search release-ceph

Last metadata expiration check: 014 ago on Tue 14 Feb 2023 14:22:00.

================= Name Matched: release-ceph =================

centos-release-ceph-nautilus.noarch : Ceph Nautilus packages from the CentOS Storage SIG repository

centos-release-ceph-octopus.noarch : Ceph Octopus packages from the CentOS Storage SIG repository

centos-release-ceph-pacific.noarch : Ceph Pacific packages from the CentOS Storage SIG repository

centos-release-ceph-quincy.noarch : Ceph Quincy packages from the CentOS Storage SIG repository

[root@node1 ~]# yum -y install centos-release-ceph-pacific.noarch

3. Install cephadm

[root@node1 ~]# yum -y install cephadm

4. Install ceph-common

[root@node1 ~]# yum -y install ceph-common

IV. Install docker-ce and python3 on the other nodes

Follow the steps in section I.

V. Deploy the Ceph cluster

5.1 Bootstrap the Ceph cluster and install the dashboard (the graphical management interface) at the same time

[root@node1 ~]# cephadm bootstrap --mon-ip 172.24.1.6 --allow-fqdn-hostname --initial-dashboard-user admin --initial-dashboard-password redhat --dashboard-password-noupdate

Verifying podman|docker is present...

Verifying lvm2 is present...

Verifying time synchronization is in place...

Unit chronyd.service is enabled and running

Repeating the final host check...

docker (/usr/bin/docker) is present

systemctl is present

lvcreate is present

Unit chronyd.service is enabled and running

Host looks OK

Cluster fsid: 0b565668-ace4-11ed-960c-5254000de7a0

Verifying IP 172.24.1.6 port 3300 ...

Verifying IP 172.24.1.6 port 6789 ...

Mon IP `172.24.1.6` is in CIDR network `172.24.1.0/24`

- internal network (--cluster-network) has not been provided, OSD replication will default to the public_network

Pulling container image quay.io/ceph/ceph:v16...

Ceph version: ceph version 16.2.11 (3cf40e2dca667f68c6ce3ff5cd94f01e711af894) pacific (stable)

Extracting ceph user uid/gid from container image...

Creating initial keys...

Creating initial monmap...

Creating mon...

Waiting for mon to start...

Waiting for mon...

mon is available

Assimilating anything we can from ceph.conf...

Generating new minimal ceph.conf...

Restarting the monitor...

Setting mon public_network to 172.24.1.0/24

Wrote config to /etc/ceph/ceph.conf

Wrote keyring to /etc/ceph/ceph.client.admin.keyring

Creating mgr...

Verifying port 9283 ...

Waiting for mgr to start...

Waiting for mgr...

mgr not available, waiting (1/15)...

mgr not available, waiting (2/15)...

mgr not available, waiting (3/15)...

mgr is available

Enabling cephadm module...

Waiting for the mgr to restart...

Waiting for mgr epoch 5...

mgr epoch 5 is available

Setting orchestrator backend to cephadm...

Generating ssh key...

Wrote public SSH key to /etc/ceph/ceph.pub

Adding key to root@localhost authorized_keys...

Adding host node1...

Deploying mon service with default placement...

Deploying mgr service with default placement...

Deploying crash service with default placement...

Deploying prometheus service with default placement...

Deploying grafana service with default placement...

Deploying node-exporter service with default placement...

Deploying alertmanager service with default placement...

Enabling the dashboard module...

Waiting for the mgr to restart...

Waiting for mgr epoch 9...

mgr epoch 9 is available

Generating a dashboard self-signed certificate...

Creating initial admin user...

Fetching dashboard port number...

Ceph Dashboard is now available at:

URL: https://node1.domain1.example.com:8443/

User: admin

Password: redhat

Enabling client.admin keyring and conf on hosts with "admin" label

You can access the Ceph CLI with:

sudo /usr/sbin/cephadm shell --fsid 0b565668-ace4-11ed-960c-5254000de7a0 -c /etc/ceph/ceph.conf -k /etc/ceph/ceph.client.admin.keyring

Please consider enabling telemetry to help improve Ceph:

ceph telemetry on

For more information see:

https://docs.ceph.com/docs/pacific/mgr/telemetry/

Bootstrap complete.
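The bootstrap log above notes that no `--cluster-network` was provided, so OSD replication falls back to the public network. If a separate replication subnet were available, the command could be assembled as in the following sketch. The subnet `10.0.0.0/24` and the variable names `CLUSTER_NET` and `DASH_PASS` are assumptions for illustration only; the original cluster has no such network. The script only prints the command for review and does not run it.

```shell
# Values taken from section 5.1.
MON_IP="172.24.1.6"
DASH_USER="admin"
# Hypothetical replication subnet; the original cluster has no separate network.
CLUSTER_NET="${CLUSTER_NET:-10.0.0.0/24}"
# Take the dashboard password from the environment if set, else use the one from 5.1.
DASH_PASS="${DASH_PASS:-redhat}"

# Print the bootstrap command line, one space after each word, for review.
bootstrap_cmd() {
    printf '%s ' cephadm bootstrap \
        --mon-ip "$MON_IP" \
        --cluster-network "$CLUSTER_NET" \
        --allow-fqdn-hostname \
        --initial-dashboard-user "$DASH_USER" \
        --initial-dashboard-password "$DASH_PASS" \
        --dashboard-password-noupdate
    echo
}

bootstrap_cmd
```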

5.2 Copy the cluster's public key to the nodes that will join the cluster

[root@node1 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub root@node2

[root@node1 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub root@node3

[root@node1 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub root@node4

5.3 Add node2, node3, and node4 (docker-ce and python3 must already be installed on each node)

[root@node1 ~]# ceph orch host add node2 172.24.1.7

Added host 'node2' with addr '172.24.1.7'

[root@node1 ~]# ceph orch host add node3 172.24.1.8

Added host 'node3' with addr '172.24.1.8'

[root@node1 ~]# ceph orch host add node4 172.24.1.9

Added host 'node4' with addr '172.24.1.9'
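Steps 5.2 and 5.3 repeat the same two commands per host, so they can be driven from a single list of name and address pairs. A minimal sketch, assuming the hypothetical helpers `run` and `add_all_hosts`; with `DRY_RUN=1`, which is the default here, the commands are only printed so they can be reviewed first.

```shell
# With DRY_RUN=1 (the default in this sketch) commands are printed, not executed.
DRY_RUN="${DRY_RUN:-1}"
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Install the cluster key on each new host, then register it with the orchestrator.
add_all_hosts() {
    for pair in node2:172.24.1.7 node3:172.24.1.8 node4:172.24.1.9; do
        name="${pair%%:*}"   # text before the colon
        ip="${pair#*:}"      # text after the colon
        run ssh-copy-id -f -i /etc/ceph/ceph.pub "root@$name"
        run ceph orch host add "$name" "$ip"
    done
}

add_all_hosts
```

Running the script with `DRY_RUN=0` on node1 would execute the commands instead of printing them.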

5.4 Give node1 and node4 the _admin label, and copy the Ceph configuration file and keyring to node4

[root@node1 ~]# ceph orch host label add node1 _admin

Added label _admin to host node1

[root@node1 ~]# ceph orch host label add node4 _admin

Added label _admin to host node4

[root@node1 ~]# scp /etc/ceph/{*.conf,*.keyring} root@node4:/etc/ceph

[root@node1 ~]# ceph orch host ls

HOST ADDR LABELS STATUS

node1 172.24.1.6 _admin

node2 172.24.1.7

node3 172.24.1.8

node4 172.24.1.9 _admin

5.5 Add mon daemons

[root@node1 ~]# ceph orch apply mon "node1,node2,node3"

Scheduled mon update...

5.6 Add mgr daemons

[root@node1 ~]# ceph orch apply mgr --placement="node1,node2,node3"

Scheduled mgr update...

5.7 Add OSDs

[root@node1 ~]# ceph orch daemon add osd node1:/dev/vdb

[root@node1 ~]# ceph orch daemon add osd node1:/dev/vdc

[root@node1 ~]# ceph orch daemon add osd node1:/dev/vdd

[root@node1 ~]# ceph orch daemon add osd node2:/dev/vdb

[root@node1 ~]# ceph orch daemon add osd node2:/dev/vdc

[root@node1 ~]# ceph orch daemon add osd node2:/dev/vdd

[root@node1 ~]# ceph orch daemon add osd node3:/dev/vdb

[root@node1 ~]# ceph orch daemon add osd node3:/dev/vdc

[root@node1 ~]# ceph orch daemon add osd node3:/dev/vdd

Or, as a single loop:

[root@node1 ~]# for i in node1 node2 node3; do for j in vdb vdc vdd; do ceph orch daemon add osd $i:/dev/$j; done; done

Created osd(s) 0 on host 'node1'

Created osd(s) 1 on host 'node1'

Created osd(s) 2 on host 'node1'

Created osd(s) 3 on host 'node2'

Created osd(s) 4 on host 'node2'

Created osd(s) 5 on host 'node2'

Created osd(s) 6 on host 'node3'

Created osd(s) 7 on host 'node3'

Created osd(s) 8 on host 'node3'

After the OSDs are created, the devices are no longer listed as available. The reject reasons below are expected, because each disk is now in use by an OSD.

[root@node1 ~]# ceph orch device ls

HOST PATH TYPE DEVICE ID SIZE AVAILABLE REFRESHED REJECT REASONS

node1 /dev/vdb hdd 10.7G 4m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node1 /dev/vdc hdd 10.7G 4m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node1 /dev/vdd hdd 10.7G 4m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node2 /dev/vdb hdd 10.7G 3m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node2 /dev/vdc hdd 10.7G 3m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node2 /dev/vdd hdd 10.7G 3m ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node3 /dev/vdb hdd 10.7G 90s ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node3 /dev/vdc hdd 10.7G 90s ago Insufficient space (<10 extents) on vgs, LVM detected, locked

node3 /dev/vdd hdd 10.7G 90s ago Insufficient space (<10 extents) on vgs, LVM detected, locked
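To confirm that the loop created one OSD per host and device, the expected count can be compared with the ids that `ceph osd ls` reports (one id per line). A minimal sketch: `expected_osds` and `verify_osd_count` are hypothetical helper names, and the host and device lists simply repeat the ones used in the loop.

```shell
# Same hosts and devices as in the nested loop from section 5.7.
OSD_HOSTS="node1 node2 node3"
OSD_DEVS="vdb vdc vdd"

# Number of OSDs the nested loop should create: hosts x devices.
expected_osds() {
    set -- $OSD_HOSTS; hosts=$#
    set -- $OSD_DEVS;  devs=$#
    echo $((hosts * devs))
}

# Compare the expectation with the number of ids printed by `ceph osd ls`.
verify_osd_count() {
    actual="$(ceph osd ls | grep -c .)"
    want="$(expected_osds)"
    if [ "$actual" -eq "$want" ]; then
        echo "all $want OSDs exist"
    else
        echo "expected $want OSDs, found $actual"
        return 1
    fi
}

echo "expected OSD count: $(expected_osds)"
```

On a node with the ceph CLI and admin keyring, `verify_osd_count` performs the comparison.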

5.8 The Ceph cluster is now fully deployed

[root@node1 ~]# ceph -s

cluster:

id: 0b565668-ace4-11ed-960c-5254000de7a0

health: HEALTH_OK

services:

mon: 3 daemons, quorum node1,node2,node3 (age 7m)

mgr: node1.cxtokn(active, since 14m), standbys: node2.heebcb, node3.fsrlxu

osd: 9 osds: 9 up (since 59s), 9 in (since 81s)

data:

pools: 1 pools, 1 pgs

objects: 0 objects, 0 B

usage: 53 MiB used, 90 GiB / 90 GiB avail

pgs: 1 active+clean
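Right after the OSDs are added, the cluster may need a short time before it reports HEALTH_OK. Rather than rerunning `ceph -s` by hand, the state can be polled with `ceph health`. A minimal sketch: `wait_for_health` is a hypothetical helper, and the defaults of 30 attempts with 10 seconds between them are arbitrary.

```shell
# Poll `ceph health` until it reports HEALTH_OK or the attempts run out.
# wait_for_health is a hypothetical helper; tries and delay are arbitrary defaults.
wait_for_health() {
    tries="${1:-30}"
    delay="${2:-10}"
    i=1
    while [ "$i" -le "$tries" ]; do
        status="$(ceph health 2>/dev/null)"
        case "$status" in
            HEALTH_OK*)
                echo "cluster healthy after $i check(s)"
                return 0
                ;;
        esac
        echo "attempt $i/$tries: ${status:-no response}"
        sleep "$delay"
        i=$((i + 1))
    done
    return 1
}
```

For example, `wait_for_health 30 10` checks for up to five minutes and returns a non-zero status if the cluster never becomes healthy.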

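5.9 Manage Ceph from node4

# The Ceph configuration file and keyring were already copied to node4 in section 5.4

[root@node4 ~]# ceph -s

-bash: ceph: command not found

# The ceph command is missing, so ceph-common has to be installed

# Install the Ceph repository

[root@node4 ~]# yum -y install centos-release-ceph-pacific.noarch

# Install ceph-common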

[root@node4 ~]# yum -y install ceph-common

[root@node4 ~]# ceph -s

cluster:

id: 0b565668-ace4-11ed-960c-5254000de7a0

health: HEALTH_OK

services:

mon: 3 daemons, quorum node1,node2,node3 (age 7m)

mgr: node1.cxtokn(active, since 14m), standbys: node2.heebcb, node3.fsrlxu

osd: 9 osds: 9 up (since 59s), 9 in (since 81s)

data:

pools: 1 pools, 1 pgs

objects: 0 objects, 0 B

usage: 53 MiB used, 90 GiB / 90 GiB avail

pgs: 1 active+clean
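A client node such as node4 needs three things before `ceph -s` works: the configuration file, the admin keyring, and the ceph CLI from ceph-common. The following sketch checks all three; `check_client_files` is a hypothetical helper, and the file names are the ones written by the bootstrap step in 5.1.

```shell
# Report whether a directory holds what the ceph CLI needs to reach the cluster.
# check_client_files is a hypothetical helper; the directory defaults to /etc/ceph.
check_client_files() {
    dir="${1:-/etc/ceph}"
    rc=0
    for f in ceph.conf ceph.client.admin.keyring; do
        # -s is true only for files that exist and are not empty.
        if [ -s "$dir/$f" ]; then
            echo "found: $dir/$f"
        else
            echo "missing: $dir/$f"
            rc=1
        fi
    done
    if ! command -v ceph >/dev/null 2>&1; then
        echo "missing: ceph CLI (install ceph-common)"
        rc=1
    fi
    return "$rc"
}
```

On node4, `check_client_files` with no argument inspects /etc/ceph and returns a non-zero status if anything is missing.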

Reviewed and edited by: Liu Qing
