从人工智能的角度看垃圾分类

电子说

描述

本月1日起,上海正式开始了“史上最严“垃圾分类的规定,扔错垃圾最高可罚200元。全国其它46个城市也要陆续步入垃圾分类新时代。各种被垃圾分类逼疯的段子在社交媒体上层出不穷。

其实从人工智能的角度看垃圾分类就是图像处理中图像分类任务的一种应用,而这在2012年以来的ImageNet图像分类任务的评比中,SENet模型以top-5测试集回归2.25%错误率的成绩可谓是技压群雄,堪称目前最强的图像分类器。

笔者刚刚还到SENet的创造者momenta公司的网站上看了一下,他们最新的方向已经是3D物体识别和标定了,效果如下:

可以说他们提出的SENet进行垃圾图像处理是完全没问题的。

Senet简介

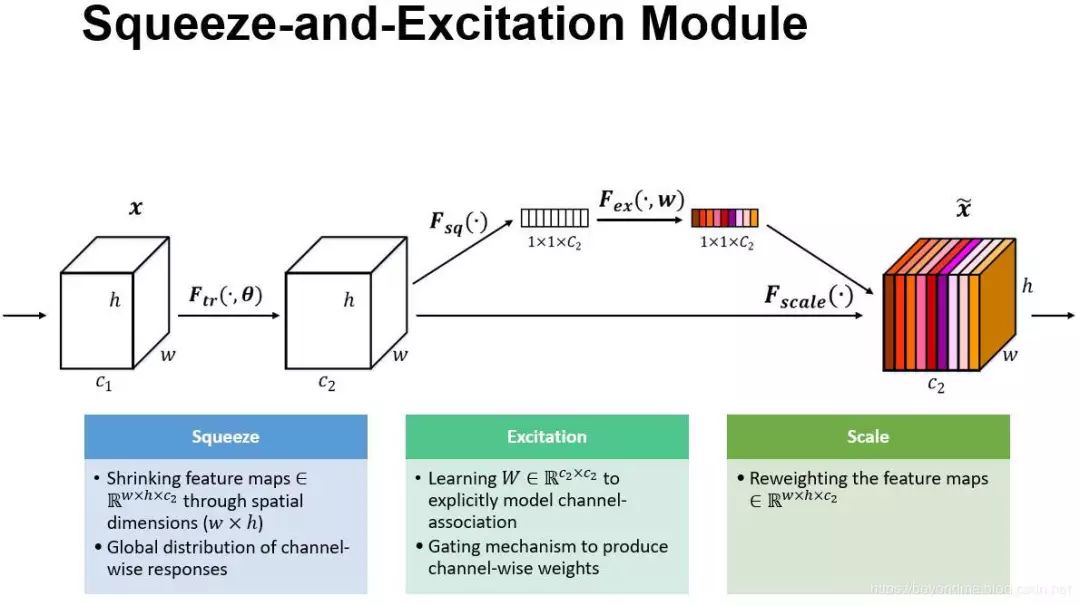

Senet的是由momenta和牛津大学共同提出的一种基于挤压(squeeze)和激励(Excitation)的模型,每个模块通过“挤压”操作嵌入来自全局感受野的信息,并且通过“激励”操作选择性地诱导响应增强。我们可以看到历年的ImageNet冠军基本都是在使用加大模型数量和连接数量的方式来提高精度,而Senet在这种”大力出奇迹”的潮流下明显是一股清流。其论文地址如下:http://openaccess.thecvf.com/content_cvpr_2018/papers/Hu_Squeeze-and-Excitation_Networks_CVPR_2018_paper.pdf

其具体原理说明如下:

Sequeeze:对 C×H×W 进行 global average pooling,得到 1×1×C 大小的特征图,这个特征图可以理解为具有全局感受野。翻译论文原文来说:将每个二维的特征通道变成一个实数,这个实数某种程度上具有全局的感受野,并且输出的维度和输入的特征通道数相匹配。它表征着在特征通道上响应的全局分布,而且使得靠近输入的层也可以获得全局的感受野。

Excitation :使用一个全连接神经网络,对 Sequeeze 之后的结果做一个非线性变换。它的机制一个类似于循环神经网络中的门。通过参数 w 来为每个特征通道生成权重,其中参数 w 被学习用来显式地建模特征通道间的相关性。

特征重标定:使用 Excitation 得到的结果作为权重,乘到输入特征上。将Excitation输出的权重可以认为是特征通道的重要性反应,逐通道加权到放到先前的特征上,完成对原始特征的重标定。

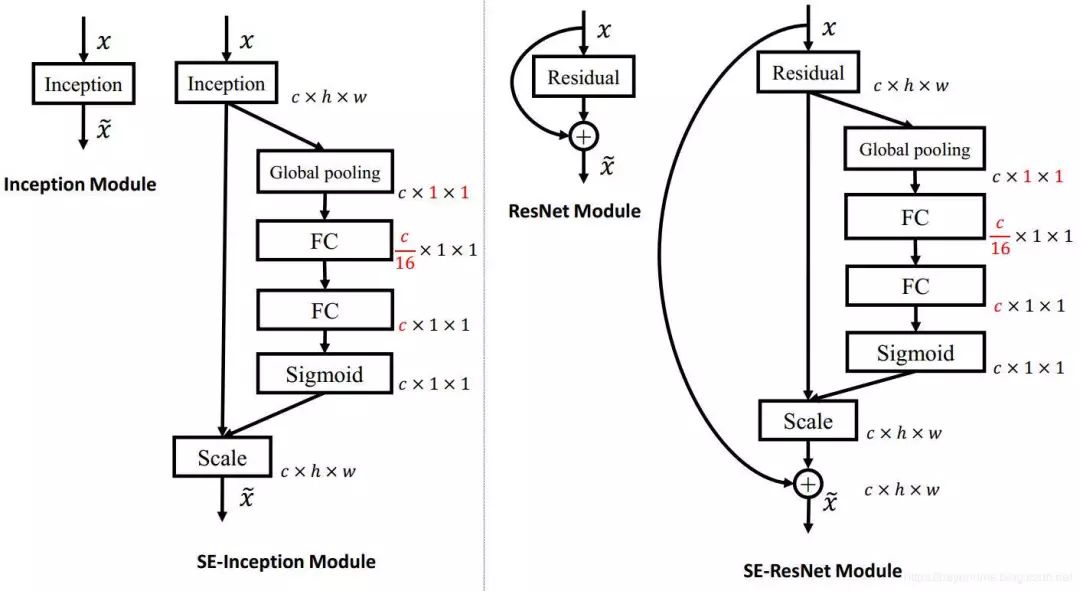

其模型架构如下:

SENet 构造非常简单,而且很容易被部署,不需要引入新的函数或者层。其caffe模型可以通过百度下载(https://pan.baidu.com/s/1o7HdfAE?errno=0&errmsg=Auth%20Login%20Sucess&&bduss=&ssnerror=0&traceid=)

Senet的运用

如果读者布署有caffe那么直接下载刚刚的模型直接load进来就可以使用了。如果没有装caffe而装了tensorflow也没关系,我们刚刚说了SENet没有引入新的函数和层,很方便用tensorflow实现。

下载图像集:经笔者各方查找发现了这个数据集,虽然不大也没有发挥出SENet的优势,不过也方便使用:

https://raw.githubusercontent.com/garythung/trashnet/master/data/dataset-resized.zip

建立SENet模型:使用tensorflow建立的模型在github上也有开源项目了,网址如下:https://github.com/taki0112/SENet-Tensorflow,只是他使用的是Cifar10数据集,不过这也没关系,只需要在gitclone以下将其cifar10.py中的prepare_data函数做如下修改即可。

1def prepare_data(): 2 print("======Loading data======") 3 download_data() 4 data_dir = 'e:/test/' 5 #data_dir = './cifar-10-batches-py'#改为你的文件侠 6 image_dim = image_size * image_size * img_channels 7 #meta = unpickle(data_dir + '/batches.meta')#本数据集不使用meta文件分类,故需要修改 8 label_names = ['cardboard','glass','metal','trash','paper','plastic'] 9 label_count = len(label_names)10 #train_files = ['data_batch_%d' % d for d in range(1, 6)]11 train_files = [data_dir+s for s in label_names]#改为12 train_data, train_labels = load_data(train_files, data_dir, label_count)13 test_data, test_labels = load_data(['test_batch'], data_dir, label_count)1415 print("Train data:", np.shape(train_data), np.shape(train_labels))16 print("Test data :", np.shape(test_data), np.shape(test_labels))17 print("======Load finished======")1819 print("======Shuffling data======")20 indices = np.random.permutation(len(train_data))21 train_data = train_data[indices]22 train_labels = train_labels[indices]23 print("======Prepare Finished======")2425 return train_data, train_labels, test_data, test_labels

其最主要的建模代码如下,其主要工作就是将SENet的模型结构实现一下即可:

1import tensorflow as tf 2from tflearn.layers.conv import global_avg_pool 3from tensorflow.contrib.layers import batch_norm, flatten 4from tensorflow.contrib.framework import arg_scope 5from cifar10 import * 6import numpy as np 7 8weight_decay = 0.0005 9momentum = 0.9 10 11init_learning_rate = 0.1 12 13reduction_ratio = 4 14 15batch_size = 128 16iteration = 391 17# 128 * 391 ~ 50,000 18 19test_iteration = 10 20 21total_epochs = 100 22 23def conv_layer(input, filter, kernel, stride=1, padding='SAME', layer_name="conv", activation=True): 24 with tf.name_scope(layer_name): 25 network = tf.layers.conv2d(inputs=input, use_bias=True, filters=filter, kernel_size=kernel, strides=stride, padding=padding) 26 if activation : 27 network = Relu(network) 28 return network 29 30def Fully_connected(x, units=class_num, layer_name='fully_connected') : 31 with tf.name_scope(layer_name) : 32 return tf.layers.dense(inputs=x, use_bias=True, units=units) 33 34def Relu(x): 35 return tf.nn.relu(x) 36 37def Sigmoid(x): 38 return tf.nn.sigmoid(x) 39 40def Global_Average_Pooling(x): 41 return global_avg_pool(x, name='Global_avg_pooling') 42 43def Max_pooling(x, pool_size=[3,3], stride=2, padding='VALID') : 44 return tf.layers.max_pooling2d(inputs=x, pool_size=pool_size, strides=stride, padding=padding) 45 46def Batch_Normalization(x, training, scope): 47 with arg_scope([batch_norm], 48 scope=scope, 49 updates_collections=None, 50 decay=0.9, 51 center=True, 52 scale=True, 53 zero_debias_moving_mean=True) : 54 return tf.cond(training, 55 lambda : batch_norm(inputs=x, is_training=training, reuse=None), 56 lambda : batch_norm(inputs=x, is_training=training, reuse=True)) 57 58def Concatenation(layers) : 59 return tf.concat(layers, axis=3) 60 61def Dropout(x, rate, training) : 62 return tf.layers.dropout(inputs=x, rate=rate, training=training) 63 64def Evaluate(sess): 65 test_acc = 0.0 66 test_loss = 0.0 67 test_pre_index = 0 68 add = 1000 69 70 for it in range(test_iteration): 71 test_batch_x = test_x[test_pre_index: test_pre_index + add] 72 test_batch_y = test_y[test_pre_index: test_pre_index + add] 73 test_pre_index = test_pre_index + add 74 75 test_feed_dict = { 76 x: test_batch_x, 77 label: test_batch_y, 78 learning_rate: epoch_learning_rate, 79 training_flag: False 80 } 81 82 loss_, acc_ = sess.run([cost, accuracy], feed_dict=test_feed_dict) 83 84 test_loss += loss_ 85 test_acc += acc_ 86 87 test_loss /= test_iteration # average loss 88 test_acc /= test_iteration # average accuracy 89 90 summary = tf.Summary(value=[tf.Summary.Value(tag='test_loss', simple_value=test_loss), 91 tf.Summary.Value(tag='test_accuracy', simple_value=test_acc)]) 92 93 return test_acc, test_loss, summary 94 95class SE_Inception_resnet_v2(): 96 def __init__(self, x, training): 97 self.training = training 98 self.model = self.Build_SEnet(x) 99100 def Stem(self, x, scope):101 with tf.name_scope(scope) :102 x = conv_layer(x, filter=32, kernel=[3,3], stride=2, padding='VALID', layer_name=scope+'_conv1')103 x = conv_layer(x, filter=32, kernel=[3,3], padding='VALID', layer_name=scope+'_conv2')104 block_1 = conv_layer(x, filter=64, kernel=[3,3], layer_name=scope+'_conv3')105106 split_max_x = Max_pooling(block_1)107 split_conv_x = conv_layer(block_1, filter=96, kernel=[3,3], stride=2, padding='VALID', layer_name=scope+'_split_conv1')108 x = Concatenation([split_max_x,split_conv_x])109110 split_conv_x1 = conv_layer(x, filter=64, kernel=[1,1], layer_name=scope+'_split_conv2')111 split_conv_x1 = conv_layer(split_conv_x1, filter=96, kernel=[3,3], padding='VALID', layer_name=scope+'_split_conv3')112113 split_conv_x2 = conv_layer(x, filter=64, kernel=[1,1], layer_name=scope+'_split_conv4')114 split_conv_x2 = conv_layer(split_conv_x2, filter=64, kernel=[7,1], layer_name=scope+'_split_conv5')115 split_conv_x2 = conv_layer(split_conv_x2, filter=64, kernel=[1,7], layer_name=scope+'_split_conv6')116 split_conv_x2 = conv_layer(split_conv_x2, filter=96, kernel=[3,3], padding='VALID', layer_name=scope+'_split_conv7')117118 x = Concatenation([split_conv_x1,split_conv_x2])119120 split_conv_x = conv_layer(x, filter=192, kernel=[3,3], stride=2, padding='VALID', layer_name=scope+'_split_conv8')121 split_max_x = Max_pooling(x)122123 x = Concatenation([split_conv_x, split_max_x])124125 x = Batch_Normalization(x, training=self.training, scope=scope+'_batch1')126 x = Relu(x)127128 return x129130 def Inception_resnet_A(self, x, scope):131 with tf.name_scope(scope) :132 init = x133134 split_conv_x1 = conv_layer(x, filter=32, kernel=[1,1], layer_name=scope+'_split_conv1')135136 split_conv_x2 = conv_layer(x, filter=32, kernel=[1,1], layer_name=scope+'_split_conv2')137 split_conv_x2 = conv_layer(split_conv_x2, filter=32, kernel=[3,3], layer_name=scope+'_split_conv3')138139 split_conv_x3 = conv_layer(x, filter=32, kernel=[1,1], layer_name=scope+'_split_conv4')140 split_conv_x3 = conv_layer(split_conv_x3, filter=48, kernel=[3,3], layer_name=scope+'_split_conv5')141 split_conv_x3 = conv_layer(split_conv_x3, filter=64, kernel=[3,3], layer_name=scope+'_split_conv6')142143 x = Concatenation([split_conv_x1,split_conv_x2,split_conv_x3])144 x = conv_layer(x, filter=384, kernel=[1,1], layer_name=scope+'_final_conv1', activation=False)145146 x = x*0.1147 x = init + x148149 x = Batch_Normalization(x, training=self.training, scope=scope+'_batch1')150 x = Relu(x)151152 return x153154 def Inception_resnet_B(self, x, scope):155 with tf.name_scope(scope) :156 init = x157158 split_conv_x1 = conv_layer(x, filter=192, kernel=[1,1], layer_name=scope+'_split_conv1')159160 split_conv_x2 = conv_layer(x, filter=128, kernel=[1,1], layer_name=scope+'_split_conv2')161 split_conv_x2 = conv_layer(split_conv_x2, filter=160, kernel=[1,7], layer_name=scope+'_split_conv3')162 split_conv_x2 = conv_layer(split_conv_x2, filter=192, kernel=[7,1], layer_name=scope+'_split_conv4')163164 x = Concatenation([split_conv_x1, split_conv_x2])165 x = conv_layer(x, filter=1152, kernel=[1,1], layer_name=scope+'_final_conv1', activation=False)166 # 1154167 x = x * 0.1168 x = init + x169170 x = Batch_Normalization(x, training=self.training, scope=scope+'_batch1')171 x = Relu(x)172173 return x174175 def Inception_resnet_C(self, x, scope):176 with tf.name_scope(scope) :177 init = x178179 split_conv_x1 = conv_layer(x, filter=192, kernel=[1,1], layer_name=scope+'_split_conv1')180181 split_conv_x2 = conv_layer(x, filter=192, kernel=[1, 1], layer_name=scope + '_split_conv2')182 split_conv_x2 = conv_layer(split_conv_x2, filter=224, kernel=[1, 3], layer_name=scope + '_split_conv3')183 split_conv_x2 = conv_layer(split_conv_x2, filter=256, kernel=[3, 1], layer_name=scope + '_split_conv4')184185 x = Concatenation([split_conv_x1,split_conv_x2])186 x = conv_layer(x, filter=2144, kernel=[1,1], layer_name=scope+'_final_conv2', activation=False)187 # 2048188 x = x * 0.1189 x = init + x190191 x = Batch_Normalization(x, training=self.training, scope=scope+'_batch1')192 x = Relu(x)193194 return x195196 def Reduction_A(self, x, scope):197 with tf.name_scope(scope) :198 k = 256199 l = 256200 m = 384201 n = 384202203 split_max_x = Max_pooling(x)204205 split_conv_x1 = conv_layer(x, filter=n, kernel=[3,3], stride=2, padding='VALID', layer_name=scope+'_split_conv1')206207 split_conv_x2 = conv_layer(x, filter=k, kernel=[1,1], layer_name=scope+'_split_conv2')208 split_conv_x2 = conv_layer(split_conv_x2, filter=l, kernel=[3,3], layer_name=scope+'_split_conv3')209 split_conv_x2 = conv_layer(split_conv_x2, filter=m, kernel=[3,3], stride=2, padding='VALID', layer_name=scope+'_split_conv4')210211 x = Concatenation([split_max_x, split_conv_x1, split_conv_x2])212213 x = Batch_Normalization(x, training=self.training, scope=scope+'_batch1')214 x = Relu(x)215216 return x217218 def Reduction_B(self, x, scope):219 with tf.name_scope(scope) :220 split_max_x = Max_pooling(x)221222 split_conv_x1 = conv_layer(x, filter=256, kernel=[1,1], layer_name=scope+'_split_conv1')223 split_conv_x1 = conv_layer(split_conv_x1, filter=384, kernel=[3,3], stride=2, padding='VALID', layer_name=scope+'_split_conv2')224225 split_conv_x2 = conv_layer(x, filter=256, kernel=[1,1], layer_name=scope+'_split_conv3')226 split_conv_x2 = conv_layer(split_conv_x2, filter=288, kernel=[3,3], stride=2, padding='VALID', layer_name=scope+'_split_conv4')227228 split_conv_x3 = conv_layer(x, filter=256, kernel=[1,1], layer_name=scope+'_split_conv5')229 split_conv_x3 = conv_layer(split_conv_x3, filter=288, kernel=[3,3], layer_name=scope+'_split_conv6')230 split_conv_x3 = conv_layer(split_conv_x3, filter=320, kernel=[3,3], stride=2, padding='VALID', layer_name=scope+'_split_conv7')231232 x = Concatenation([split_max_x, split_conv_x1, split_conv_x2, split_conv_x3])233234 x = Batch_Normalization(x, training=self.training, scope=scope+'_batch1')235 x = Relu(x)236237 return x238239 def Squeeze_excitation_layer(self, input_x, out_dim, ratio, layer_name):240 with tf.name_scope(layer_name) :241242243 squeeze = Global_Average_Pooling(input_x)244245 excitation = Fully_connected(squeeze, units=out_dim / ratio, layer_name=layer_name+'_fully_connected1')246 excitation = Relu(excitation)247 excitation = Fully_connected(excitation, units=out_dim, layer_name=layer_name+'_fully_connected2')248 excitation = Sigmoid(excitation)249250 excitation = tf.reshape(excitation, [-1,1,1,out_dim])251 scale = input_x * excitation252253 return scale254255 def Build_SEnet(self, input_x):256 input_x = tf.pad(input_x, [[0, 0], [32, 32], [32, 32], [0, 0]])257 # size 32 -> 96258 print(np.shape(input_x))259 # only cifar10 architecture260261 x = self.Stem(input_x, scope='stem')262263 for i in range(5) :264 x = self.Inception_resnet_A(x, scope='Inception_A'+str(i))265 channel = int(np.shape(x)[-1])266 x = self.Squeeze_excitation_layer(x, out_dim=channel, ratio=reduction_ratio, layer_name='SE_A'+str(i))267268 x = self.Reduction_A(x, scope='Reduction_A')269270 channel = int(np.shape(x)[-1])271 x = self.Squeeze_excitation_layer(x, out_dim=channel, ratio=reduction_ratio, layer_name='SE_A')272273 for i in range(10) :274 x = self.Inception_resnet_B(x, scope='Inception_B'+str(i))275 channel = int(np.shape(x)[-1])276 x = self.Squeeze_excitation_layer(x, out_dim=channel, ratio=reduction_ratio, layer_name='SE_B'+str(i))277278 x = self.Reduction_B(x, scope='Reduction_B')279280 channel = int(np.shape(x)[-1])281 x = self.Squeeze_excitation_layer(x, out_dim=channel, ratio=reduction_ratio, layer_name='SE_B')282283 for i in range(5) :284 x = self.Inception_resnet_C(x, scope='Inception_C'+str(i))285 channel = int(np.shape(x)[-1])286 x = self.Squeeze_excitation_layer(x, out_dim=channel, ratio=reduction_ratio, layer_name='SE_C'+str(i))287288289 # channel = int(np.shape(x)[-1])290 # x = self.Squeeze_excitation_layer(x, out_dim=channel, ratio=reduction_ratio, layer_name='SE_C')291292 x = Global_Average_Pooling(x)293 x = Dropout(x, rate=0.2, training=self.training)294 x = flatten(x)295296 x = Fully_connected(x, layer_name='final_fully_connected')297 return x298299300train_x, train_y, test_x, test_y = prepare_data()301train_x, test_x = color_preprocessing(train_x, test_x)302303304# image_size = 32, img_channels = 3, class_num = 10 in cifar10305x = tf.placeholder(tf.float32, shape=[None, image_size, image_size, img_channels])306label = tf.placeholder(tf.float32, shape=[None, class_num])307308training_flag = tf.placeholder(tf.bool)309310311learning_rate = tf.placeholder(tf.float32, name='learning_rate')312313logits = SE_Inception_resnet_v2(x, training=training_flag).model314cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=label, logits=logits))315316l2_loss = tf.add_n([tf.nn.l2_loss(var) for var in tf.trainable_variables()])317optimizer = tf.train.MomentumOptimizer(learning_rate=learning_rate, momentum=momentum, use_nesterov=True)318train = optimizer.minimize(cost + l2_loss * weight_decay)319320correct_prediction = tf.equal(tf.argmax(logits, 1), tf.argmax(label, 1))321accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))322323saver = tf.train.Saver(tf.global_variables())324325with tf.Session() as sess:326 ckpt = tf.train.get_checkpoint_state('./model')327 if ckpt and tf.train.checkpoint_exists(ckpt.model_checkpoint_path):328 saver.restore(sess, ckpt.model_checkpoint_path)329 else:330 sess.run(tf.global_variables_initializer())331332 summary_writer = tf.summary.FileWriter('./logs', sess.graph)333334 epoch_learning_rate = init_learning_rate335 for epoch in range(1, total_epochs + 1):336 if epoch % 30 == 0 :337 epoch_learning_rate = epoch_learning_rate / 10338339 pre_index = 0340 train_acc = 0.0341 train_loss = 0.0342343 for step in range(1, iteration + 1):344 if pre_index + batch_size < 50000:345 batch_x = train_x[pre_index: pre_index + batch_size]346 batch_y = train_y[pre_index: pre_index + batch_size]347 else:348 batch_x = train_x[pre_index:]349 batch_y = train_y[pre_index:]350351 batch_x = data_augmentation(batch_x)352353 train_feed_dict = {354 x: batch_x,355 label: batch_y,356 learning_rate: epoch_learning_rate,357 training_flag: True358 }359360 _, batch_loss = sess.run([train, cost], feed_dict=train_feed_dict)361 batch_acc = accuracy.eval(feed_dict=train_feed_dict)362363 train_loss += batch_loss364 train_acc += batch_acc365 pre_index += batch_size366367368 train_loss /= iteration # average loss369 train_acc /= iteration # average accuracy370371 train_summary = tf.Summary(value=[tf.Summary.Value(tag='train_loss', simple_value=train_loss),372 tf.Summary.Value(tag='train_accuracy', simple_value=train_acc)])373374 test_acc, test_loss, test_summary = Evaluate(sess)375376 summary_writer.add_summary(summary=train_summary, global_step=epoch)377 summary_writer.add_summary(summary=test_summary, global_step=epoch)378 summary_writer.flush()379380 line = "epoch: %d/%d, train_loss: %.4f, train_acc: %.4f, test_loss: %.4f, test_acc: %.4f " % (381 epoch, total_epochs, train_loss, train_acc, test_loss, test_acc)382 print(line)383384 with open('logs.txt', 'a') as f:385 f.write(line)386387 saver.save(sess=sess, save_path='./model/Inception_resnet_v2.ckpt')

其实使用SENet做垃圾分类真是大才小用了,不过大家也可以感受一下他的实力强大。

-

人工智能是什么?2015-09-16 6435

-

【下载】《人工智能标准化白皮书(2018版)》2018-02-02 24033

-

人工智能就业前景2018-03-29 8426

-

解读人工智能的未来2018-11-14 4915

-

人工智能医生未来或上线,人工智能医疗市场规模持续增长2019-02-24 5883

-

人工智能上路需要知道什么常识2019-05-13 4154

-

人工智能:超越炒作2019-05-29 5031

-

人工智能分类垃圾桶原理2021-07-21 2179

-

人工智能芯片是人工智能发展的2021-07-27 6694

-

物联网人工智能是什么?2021-09-09 5344

-

《移动终端人工智能技术与应用开发》人工智能的发展与AI技术的进步2023-02-17 2372

-

人工智能垃圾分类机在山东青岛投入使用2019-01-10 4190

-

当垃圾分类遇到人工智能 “玩中学”令人体验更深2019-07-03 466

-

哪种人工智能更擅长垃圾分类2019-11-06 2176

-

人工智能是如何将垃圾分类的2020-03-01 5384

全部0条评论

快来发表一下你的评论吧 !